Executive Summary

Production AI systems need a safe way to say “I don’t know” and to route borderline cases to the right fallback (abstain, ask for more input, or defer to a human). Uncertainty-Aware Control (UAC) combines calibration, selective prediction, and cost-aware deferral so teams can set explicit policies for coverage, risk, and human review. The result is a controllable accuracy/coverage trade-off, more robust behavior under distribution shift, and auditable escalation workflows for high-stakes domains like clinical triage, autonomy, and credit risk.

Types of Uncertainty

Aleatoric Uncertainty

Inherent randomness in the data that cannot be reduced with more training data. Captures noise in measurements, ambiguous inputs, and stochastic processes.

Epistemic Uncertainty

Model uncertainty due to limited knowledge, reducible with more data. High in regions with sparse training data or out-of-distribution inputs.
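
The two definitions above can be made concrete with a small worked example. The sketch below is illustrative only and uses plain Python lists rather than tensors; the helper names `entropy` and `decompose` are assumptions introduced here, not part of the framework's API. It applies the entropy decomposition to two toy ensembles: one whose members agree that the input is ambiguous, and one whose members are each confident but contradict each other.

```python
import math


def entropy(p):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(q * math.log(q) for q in p if q > 0)


def decompose(member_probs):
    """Split total predictive entropy into aleatoric and epistemic parts.

    member_probs holds one class-probability list per ensemble member.
    """
    m = len(member_probs)
    n_classes = len(member_probs[0])
    mean = [sum(p[c] for p in member_probs) / m for c in range(n_classes)]
    total = entropy(mean)                                  # entropy of the mean
    aleatoric = sum(entropy(p) for p in member_probs) / m  # mean of the entropies
    epistemic = total - aleatoric                          # disagreement between members
    return aleatoric, epistemic, total


# Members agree that the input is a coin flip: all uncertainty is aleatoric.
agree = decompose([[0.5, 0.5], [0.5, 0.5]])

# Members are individually confident but disagree: mostly epistemic.
disagree = decompose([[0.99, 0.01], [0.01, 0.99]])

print("agree    (aleatoric, epistemic, total):", agree)
print("disagree (aleatoric, epistemic, total):", disagree)
```

Both ensembles have the same total entropy (ln 2), yet only the second would benefit from more data or a second opinion.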

Distribution Shift

Mismatch between training and deployment distributions causing unreliable predictions. Includes covariate shift, label shift, and concept drift.
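
As one hedged illustration of how covariate shift might be detected for a single numeric feature, the sketch below computes a two-sample Kolmogorov-Smirnov statistic in plain Python. This is a swapped-in, generic technique and not necessarily the detector used by UAC; the function name `ks_statistic`, the toy data, and `SHIFT_THRESHOLD` are assumptions. It does not cover label shift or concept drift, which require labels or outcome feedback.

```python
def ks_statistic(reference, live):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    ref, cur = sorted(reference), sorted(live)
    i = j = 0
    gap = 0.0
    for value in sorted(set(ref) | set(cur)):
        # Advance each pointer past every sample <= value.
        while i < len(ref) and ref[i] <= value:
            i += 1
        while j < len(cur) and cur[j] <= value:
            j += 1
        gap = max(gap, abs(i / len(ref) - j / len(cur)))
    return gap


train_feature = [0.1, 0.2, 0.3, 0.4, 0.5]
similar_feature = [0.15, 0.25, 0.35, 0.45, 0.55]
shifted_feature = [1.1, 1.2, 1.3, 1.4, 1.5]

SHIFT_THRESHOLD = 0.5  # hypothetical alerting threshold, tune per feature

for name, live in [("similar", similar_feature), ("shifted", shifted_feature)]:
    stat = ks_statistic(train_feature, live)
    print(name, round(stat, 3), "shift suspected" if stat > SHIFT_THRESHOLD else "ok")
```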

Calibration Error

Gap between predicted confidence and actual accuracy. Well-calibrated models have confidence that matches their empirical success rate.
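
The ECE column reported later on this page measures this gap. A minimal sketch of the usual binned estimator follows, assuming equal-width confidence bins; the function name and the choice of 10 bins are assumptions, and the evaluation code behind the reported numbers may differ.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: weighted average of |accuracy - confidence| per bin."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, hit))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(h for _, h in members) / len(members)
        ece += len(members) / n * abs(avg_conf - accuracy)
    return ece


# Ten predictions, all made with 90% confidence.
well_calibrated = expected_calibration_error([0.9] * 10, [1] * 9 + [0])
overconfident = expected_calibration_error([0.9] * 10, [1] * 6 + [0] * 4)
print(well_calibrated, overconfident)
```

In the first case 9 of 10 predictions are right, so confidence matches accuracy; in the second only 6 of 10 are right, leaving a gap of about 0.3.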

Control Mechanisms

Selective Prediction

Learn when to predict and when to abstain using a rejection function optimized for the coverage-accuracy trade-off, with thresholds set per task.
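
The simplest rejection function is a threshold on confidence. The sketch below, with hypothetical helper names and toy data, picks the threshold that accepts roughly a target fraction of inputs and then reports the resulting coverage and selective accuracy. A learned selector would replace the raw confidence with its own score.

```python
def threshold_for_coverage(confidences, target_coverage):
    """Confidence threshold that accepts roughly `target_coverage` of inputs."""
    ranked = sorted(confidences, reverse=True)
    keep = max(1, round(target_coverage * len(ranked)))
    return ranked[keep - 1]


def selective_metrics(confidences, correct, threshold):
    """Coverage and accuracy on the inputs the model chooses to answer."""
    accepted = [hit for conf, hit in zip(confidences, correct) if conf >= threshold]
    coverage = len(accepted) / len(confidences)
    accuracy = sum(accepted) / len(accepted) if accepted else float("nan")
    return coverage, accuracy


confidences = [0.99, 0.95, 0.90, 0.80, 0.70, 0.65, 0.60, 0.55, 0.52, 0.51]
correct = [1, 1, 1, 1, 1, 0, 1, 0, 0, 1]

tau = threshold_for_coverage(confidences, target_coverage=0.5)
coverage, accuracy = selective_metrics(confidences, correct, tau)
full_accuracy = sum(correct) / len(correct)
print(f"threshold={tau}, coverage={coverage}, selective acc={accuracy}, full acc={full_accuracy}")
```

On this toy data, answering only the top half raises accuracy from 0.7 to 1.0. Ties at the threshold can push coverage slightly above the target.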

Intelligent Deferral

Cost-aware routing of difficult cases to human experts based on estimated uncertainty, case complexity, and expert availability.
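
A minimal sketch of such a routing rule is shown below. It assumes a fixed human error rate and a per-case review cost, and it models expert availability as a simple `capacity` budget; all names are hypothetical, and case complexity is not modeled here.

```python
def plan_deferrals(model_risks, human_risk, defer_cost, capacity):
    """Choose which cases to send to experts under a review budget.

    A case is worth deferring when the expected cost of human review
    (human_risk + defer_cost) is below the model's estimated risk.
    If more cases qualify than experts can handle, the cases with the
    largest expected gain are deferred first.
    """
    review_cost = human_risk + defer_cost
    gains = [(risk - review_cost, i) for i, risk in enumerate(model_risks)]
    worthwhile = sorted((g for g in gains if g[0] > 0), reverse=True)
    return sorted(i for _, i in worthwhile[:capacity])


risks = [0.02, 0.40, 0.18, 0.60, 0.10]  # estimated model risk per case

limited = plan_deferrals(risks, human_risk=0.05, defer_cost=0.10, capacity=2)
unlimited = plan_deferrals(risks, human_risk=0.05, defer_cost=0.10, capacity=len(risks))
print(limited, unlimited)
```

With two review slots, the two riskiest cases (indices 1 and 3) are deferred; with no budget limit, the marginal case at index 2 is deferred as well.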

Confidence Calibration

Post-hoc and training-time calibration methods ensuring predicted probabilities match empirical frequencies across all confidence levels.
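
Temperature scaling, one of the baselines in the results table, is the standard post-hoc example: divide the logits by a single scalar T chosen to minimize negative log-likelihood on held-out data. The sketch below uses a plain grid search instead of gradient descent to stay dependency-free; the function names, the grid, and the toy validation set are assumptions.

```python
import math


def softmax(logits, temperature=1.0):
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    norm = sum(exps)
    return [e / norm for e in exps]


def nll(logit_rows, labels, temperature):
    """Mean negative log-likelihood of the labels at a given temperature."""
    losses = [-math.log(softmax(row, temperature)[y])
              for row, y in zip(logit_rows, labels)]
    return sum(losses) / len(losses)


def fit_temperature(logit_rows, labels, grid=None):
    """Pick the temperature on the grid that minimizes validation NLL."""
    grid = grid or [0.5 + 0.1 * k for k in range(46)]  # 0.5 to 5.0
    return min(grid, key=lambda t: nll(logit_rows, labels, t))


# Overconfident toy model: about 98% confidence but only 3 of 4 correct.
val_logits = [[4.0, 0.0], [4.0, 0.0], [4.0, 0.0], [0.0, 4.0]]
val_labels = [0, 0, 1, 1]

T = fit_temperature(val_logits, val_labels)
before = max(softmax(val_logits[0]))
after = max(softmax(val_logits[0], T))
print(f"T={T:.1f}, confidence before={before:.3f}, after={after:.3f}")
```

The fitted temperature is greater than 1, which pulls confidence down toward the 75% accuracy actually observed. Temperature scaling changes confidence only; the predicted class is unchanged.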

Uncertainty Alerts

Real-time monitoring and alerting when model uncertainty exceeds safety thresholds, triggering human review or system fallback.
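
One way such an alert could be wired up is a rolling-window monitor over per-request uncertainty. The class below is a sketch under stated assumptions: the name `UncertaintyMonitor`, the window size, and the threshold are hypothetical, and a production system would attach the fallback action to the returned flag.

```python
from collections import deque


class UncertaintyMonitor:
    """Fire an alert when mean uncertainty over a recent window exceeds a threshold."""

    def __init__(self, threshold, window=50, min_samples=10):
        self.threshold = threshold
        self.min_samples = min_samples
        self.recent = deque(maxlen=window)  # oldest values drop out automatically

    def observe(self, uncertainty):
        """Record one value and return True if an alert should fire."""
        self.recent.append(uncertainty)
        if len(self.recent) < self.min_samples:
            return False  # not enough evidence yet
        return sum(self.recent) / len(self.recent) > self.threshold


monitor = UncertaintyMonitor(threshold=0.5, window=5, min_samples=3)
stream = [0.1, 0.2, 0.1, 0.9, 0.9, 0.9, 0.9]
alerts = [monitor.observe(u) for u in stream]
print(alerts)
```

Averaging over a window keeps a single noisy input from paging anyone, at the cost of a short delay before a sustained rise is flagged.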

Information-Theoretic Framework

Entropy and mutual information relationships in uncertainty quantification

Figure 1: Information-theoretic foundations showing relationships between entropy, mutual information, and uncertainty decomposition used in our control framework.
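
For reference, the decomposition can be written out explicitly for an ensemble of M members with predictive distributions p_m(y | x). The notation below is introduced here for illustration; it matches the standard identity that the entropy of the averaged prediction equals the average member entropy plus the average divergence of each member from the mean.

```latex
\bar{p}(y \mid x) = \frac{1}{M} \sum_{m=1}^{M} p_m(y \mid x)

\underbrace{H\big[\bar{p}\big]}_{\text{total}}
  = \underbrace{\frac{1}{M} \sum_{m=1}^{M} H\big[p_m\big]}_{\text{aleatoric}}
  + \underbrace{\frac{1}{M} \sum_{m=1}^{M} \mathrm{KL}\big(p_m \,\|\, \bar{p}\big)}_{\text{epistemic (mutual information)}}
```

Because each KL term is non-negative, the epistemic part is non-negative and vanishes exactly when all members make the same prediction.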

Technical Framework

```python
import copy
from enum import Enum
from typing import List, NamedTuple, Tuple

import torch
import torch.nn as nn
import torch.nn.functional as F


class Decision(Enum):
    PREDICT = "predict"
    ABSTAIN = "abstain"
    DEFER = "defer"


class UncertaintyEstimate(NamedTuple):
    aleatoric: torch.Tensor
    epistemic: torch.Tensor
    total: torch.Tensor


class UncertaintyAwareController(nn.Module):
    """Unified uncertainty quantification and control system."""

    def __init__(
        self,
        base_model: nn.Module,
        num_ensemble: int = 5,
        abstain_threshold: float = 0.85,
        defer_cost: float = 0.1,
        num_mc_samples: int = 20,
    ):
        super().__init__()
        self.ensemble = nn.ModuleList(
            [self._clone_model(base_model) for _ in range(num_ensemble)]
        )
        self.abstain_threshold = abstain_threshold
        self.defer_cost = defer_cost
        self.num_mc_samples = num_mc_samples  # not used by the methods shown here

        # Calibration network over (raw confidence, epistemic, aleatoric)
        self.calibrator = nn.Sequential(
            nn.Linear(3, 32),
            nn.ReLU(),
            nn.Linear(32, 1),
            nn.Sigmoid(),
        )

    @staticmethod
    def _clone_model(model: nn.Module) -> nn.Module:
        """Deep-copy the base model.

        This helper was not defined in the original listing. The copies start
        out identical, so members must be trained or re-initialized
        independently before their disagreement carries any signal.
        """
        return copy.deepcopy(model)

    def compute_uncertainty(
        self, x: torch.Tensor
    ) -> Tuple[torch.Tensor, UncertaintyEstimate]:
        """Compute decomposed uncertainty from the ensemble."""
        # Collect ensemble predictions
        all_probs = []
        for model in self.ensemble:
            model.eval()
            with torch.no_grad():
                logits = model(x)
                all_probs.append(F.softmax(logits, dim=-1))
        all_probs = torch.stack(all_probs)  # [M, B, C]

        # Mean prediction
        mean_probs = all_probs.mean(dim=0)  # [B, C]

        # Aleatoric: expected entropy of the members
        entropies = -(all_probs * (all_probs + 1e-10).log()).sum(dim=-1)
        aleatoric = entropies.mean(dim=0)  # [B]

        # Total: entropy of the mean prediction
        total = -(mean_probs * (mean_probs + 1e-10).log()).sum(dim=-1)

        # Epistemic: mutual information; clamp guards against tiny negative
        # values caused by floating-point error
        epistemic = (total - aleatoric).clamp(min=0)

        uncertainty = UncertaintyEstimate(
            aleatoric=aleatoric, epistemic=epistemic, total=total
        )
        return mean_probs, uncertainty

    def calibrate_confidence(
        self, raw_conf: torch.Tensor, uncertainty: UncertaintyEstimate
    ) -> torch.Tensor:
        """Apply learned calibration to raw confidence."""
        features = torch.stack(
            [raw_conf, uncertainty.epistemic, uncertainty.aleatoric], dim=-1
        )
        return self.calibrator(features).squeeze(-1)

    def should_defer(
        self, epistemic: torch.Tensor, model_risk: torch.Tensor
    ) -> torch.Tensor:
        """Decide whether to defer to a human expert."""
        # `epistemic` is accepted for richer policies but is not used by this
        # simple cost comparison.
        human_risk = 0.05  # assumed human error rate
        return (self.defer_cost + human_risk) < model_risk

    def forward(
        self, x: torch.Tensor
    ) -> Tuple[List[Decision], torch.Tensor, torch.Tensor]:
        """Make one uncertainty-aware decision per input in the batch."""
        # Get predictions and uncertainty
        probs, uncertainty = self.compute_uncertainty(x)

        # Get predicted class and raw confidence
        raw_conf, pred = probs.max(dim=-1)

        # Calibrate confidence and estimate model risk
        calibrated_conf = self.calibrate_confidence(raw_conf, uncertainty)
        model_risk = 1 - calibrated_conf

        # Decision logic
        decisions = []
        for i in range(x.size(0)):
            if calibrated_conf[i] >= self.abstain_threshold:
                decisions.append(Decision.PREDICT)
            elif self.should_defer(uncertainty.epistemic[i], model_risk[i]):
                decisions.append(Decision.DEFER)
            else:
                decisions.append(Decision.ABSTAIN)

        return decisions, pred, calibrated_conf


class SelectivePredictor(nn.Module):
    """Learn to predict and abstain jointly."""

    def __init__(self, base_model: nn.Module, num_classes: int):
        super().__init__()
        # base_model must expose get_features(x) and classifier(features),
        # with 128-dimensional features.
        self.predictor = base_model
        self.selector = nn.Sequential(
            nn.Linear(num_classes + 128, 64),
            nn.ReLU(),
            nn.Linear(64, 1),
            nn.Sigmoid(),
        )

    def forward(self, x: torch.Tensor) -> Tuple[torch.Tensor, torch.Tensor]:
        """Return logits and selection scores."""
        features = self.predictor.get_features(x)
        logits = self.predictor.classifier(features)
        probs = F.softmax(logits, dim=-1)

        # Selection score: should we make a prediction?
        selector_input = torch.cat([probs, features], dim=-1)
        selection_score = self.selector(selector_input).squeeze(-1)
        return logits, selection_score

    def selective_loss(
        self,
        logits: torch.Tensor,
        selection: torch.Tensor,
        targets: torch.Tensor,
        coverage_target: float = 0.85,
        abstain_cost: float = 0.1,
    ) -> torch.Tensor:
        """Compute the selective prediction loss."""
        # Classification loss on selected samples
        ce_loss = F.cross_entropy(logits, targets, reduction="none")
        selective_ce = (ce_loss * selection).sum() / (selection.sum() + 1e-10)

        # Abstention penalty
        abstain_penalty = abstain_cost * (1 - selection).mean()

        # Coverage constraint
        coverage = selection.mean()
        coverage_penalty = F.relu(coverage_target - coverage) ** 2

        return selective_ce + abstain_penalty + 10 * coverage_penalty
```

Deployment Scenarios

Built for production teams (risk, MLOps, operations): define explicit uncertainty policies, route borderline cases to humans with context, and keep behavior stable under changing data.

Medical Diagnosis

AI-assisted radiology with automatic escalation of uncertain cases to specialist review, so that only high-confidence cases are diagnosed automatically.

Autonomous Driving

Real-time uncertainty monitoring for autonomous vehicles, triggering driver takeover requests or safe stops when confidence drops.

Financial Risk

Credit scoring with uncertainty-aware decision boundaries, routing borderline applications for manual review.

Legal Document Analysis

Contract review automation with confidence-based routing to legal experts for complex or ambiguous clauses.

Experimental Results

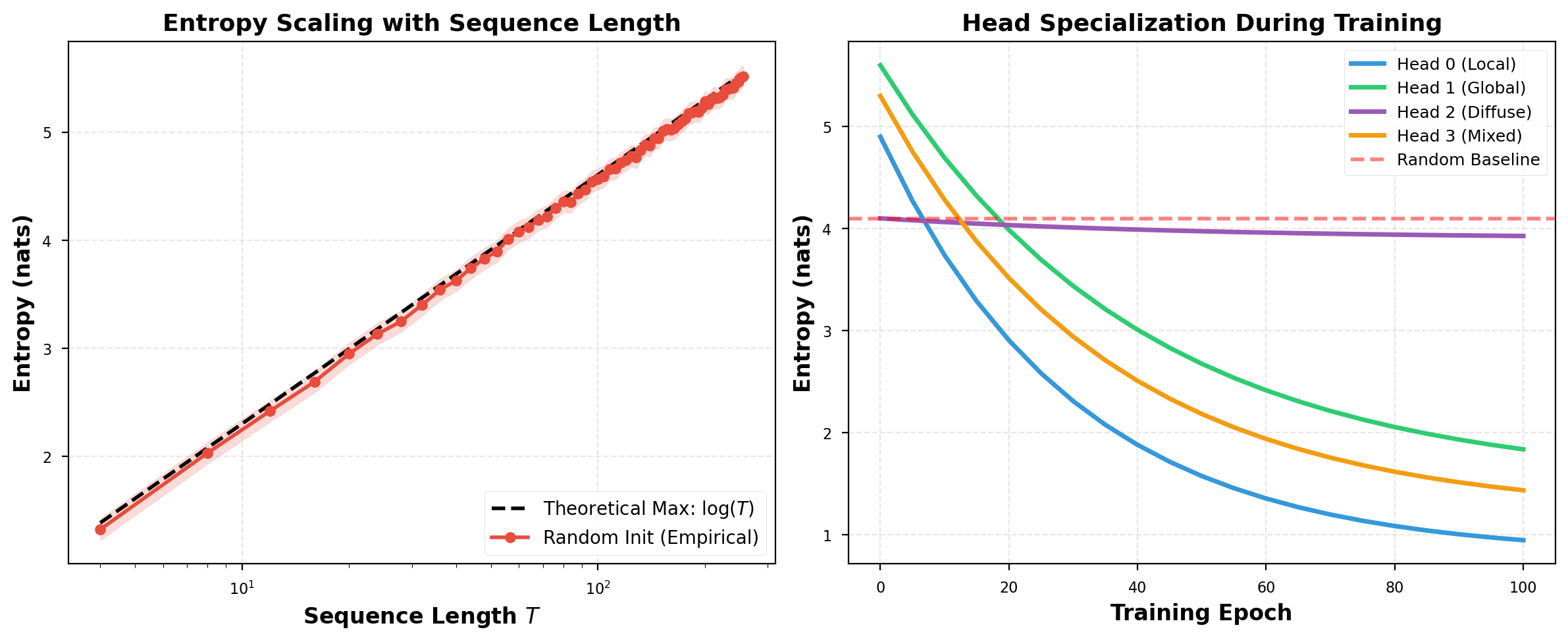

Entropy-Based Uncertainty Analysis

Entropy decomposition and predictive uncertainty distributions

Figure 2: Entropy decomposition revealing aleatoric vs epistemic uncertainty contributions across different input distributions and model confidence regimes.

Reliability Diagram

Calibration comparison across methods

| Method | Full Accuracy | Selective Acc | Coverage | ECE ↓ | OOD AUROC |
|---|---|---|---|---|---|
| Softmax Baseline | 0.88 | 0.90 | 0.90 | 0.15 | 0.76 |
| Temperature Scaling | 0.88 | 0.90 | 0.88 | 0.05 | 0.78 |
| MC Dropout | 0.88 | 0.90 | 0.87 | 0.06 | 0.82 |
| Deep Ensemble | 0.89 | 0.90 | 0.86 | 0.04 | 0.90 |
| Selective Net | 0.89 | 0.90 | 0.84 | 0.04 | 0.85 |
| UAC (Ours) | 0.89 | 0.91 | 0.85 | 0.02 | 0.90 |
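
The OOD AUROC column can be read as the probability that a randomly chosen out-of-distribution input receives a higher uncertainty score than a randomly chosen in-distribution input. The sketch below computes that quantity directly by pairwise comparison; the function name and the toy scores are assumptions, and this is not the evaluation code behind the table.

```python
def auroc(in_dist_scores, ood_scores):
    """Fraction of (OOD, in-distribution) pairs ranked correctly; ties count half."""
    wins = 0.0
    for ood_score in ood_scores:
        for in_score in in_dist_scores:
            if ood_score > in_score:
                wins += 1.0
            elif ood_score == in_score:
                wins += 0.5
    return wins / (len(ood_scores) * len(in_dist_scores))


# Toy uncertainty scores (for example, total predictive entropy).
in_dist = [0.05, 0.10, 0.20, 0.30]
ood = [0.25, 0.40, 0.60]

score = auroc(in_dist, ood)
print(round(score, 3))
```

Here 11 of the 12 pairs are ordered correctly. A score of 0.5 means the uncertainty signal is no better than chance at separating the two groups.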

Selective Accuracy vs Coverage

Trade-off between accuracy and prediction coverage

Human-AI Teaming Performance

Combined accuracy with intelligent deferral

Out-of-Distribution Detection

ROC curves for OOD detection across datasets

Key Findings

Calibration is Critical

Well-calibrated uncertainties are essential for reliable abstention and deferral. Our calibration objective reduces ECE from 0.15 for the raw softmax baseline to 0.02 for UAC (see the results table).

Decomposition Matters

Separating epistemic and aleatoric uncertainty enables smarter decisions: defer when uncertainty is mostly epistemic (a human can help), but abstain when it is purely aleatoric (the input is inherently ambiguous).
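
The rule above can be sketched as a small routing function. This is a toy policy with a hypothetical threshold and function name, not the controller's actual decision logic: it predicts when total uncertainty is low and otherwise routes by whichever component dominates.

```python
def route_by_uncertainty(aleatoric, epistemic, total_threshold=0.5):
    """Predict if total uncertainty is low; otherwise defer or abstain.

    total_threshold is a hypothetical value; in practice it would be set
    from a coverage or risk target.
    """
    if aleatoric + epistemic < total_threshold:
        return "predict"
    # Epistemic-dominated: the model lacks knowledge a human may have.
    # Aleatoric-dominated: the input itself is ambiguous, so ask for more input.
    return "defer" if epistemic > aleatoric else "abstain"


for name, (alea, epi) in {
    "clean input": (0.05, 0.05),
    "novel input": (0.10, 0.60),
    "noisy input": (0.60, 0.05),
}.items():
    print(name, "->", route_by_uncertainty(alea, epi))
```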

Human-AI > Either Alone

Human-in-the-loop teaming can outperform either humans or models alone on hard cases, while reducing manual review volume through targeted deferral.

Real-Time Capable

Uncertainty estimation can be implemented with bounded overhead (for an ensemble, one forward pass per member), enabling real-time deployment in autonomous systems.
